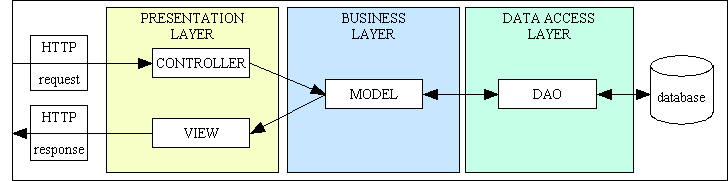

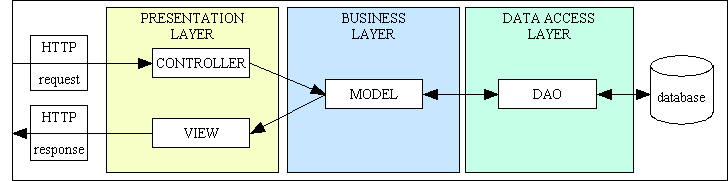

Figure 1 - The MVC and 3-Tier architectures combined

In October this year I came across several articles written by Yegor Bugayenko called "What's wrong with OOP", "MVC vs. OOP" and "SOLID Is OOP for Dummies" in which a person calling himself Hall of Famer made certain statements which I deemed worthy of comment. Below is a summary of the exchanges which followed.

In this post Hall of Famer said the following:

There is something very wrong with procedural programming, with procedural programming you always end up with amateurish spaghetti code.

Having spent over a decade using that well-known procedural language called COBOL, and having been on a Jackson Structured Programming (JSP) course and learned about Modular Programming and Structured Programming, I considered his statement to be flawed, so I countered his view with this reply in which I stated the following:

I disagree. The opposite of spaghetti code is structured code, and it is possible to write structured code in a procedural language just as it is to write spaghetti code in an OO language.

Hall of Famer then replied with this:

Nope it is not, procedural programming always leads to unstructured spaghetti code. If you write in a procedural language with modular design approach, it is actually OO design. Procedural design is spaghetti code, theres no way to write good procedural code. Again note the difference between procedural syntax and procedural design. With C you have to stick with procedural syntax with no OO support natively, but the design approach still can be OO.

I responded with this:

Did you come up with that idea yourself, or can you point to any articles which support that claim? The definition of spaghetti code includes the word "unstructured", so procedural code which is properly structured is NOT spaghetti code. Procedural code which is structured is NOT OO design as OO requires encapsulation, inheritance and polymorphism. Structured programming, as taught by Michael Jackson, is based on sequence, iteration and selection. Besides, OO design did not exist in the 1970s and 80s when I was using COBOL.

He immediately came back with the following:

Not really, many people have thought of this in the past. The OO design approach really, doesn't necessarily require OO syntax. It is a methodology, and the OO mindset is far more important than OO syntax itself. Just because you use objects doesn't mean you write OO code, procedural code encapsulated in objects ain't OO code.

And nope, there is no way to write properly structured procedural code. Procedural code = spaghetti code, you again had a confusion between procedural code and code written in procedural language. By properly structured I mean application-level design and architecturing, not just writing structures such as control structures, loops and functions. Just because your application has a structure, doesn't mean its 'properly' structured.

I could not let such ridiculous statements go by, so I replied with the following:

"The OO design approach really, doesn't necessarily require OO syntax."

You are confusing OO design with OO programming. A design is, or should be, language agnostic as it describes the application before it is built. A good design should be able to be implemented in any language, procedural or OO.

OO programming can only be achieved with a language which directly supports the OO principles of encapsulation, inheritance and polymorphism. If you use those features then your code is OO. If you don't use those features then your code is not OO.

"procedural code encapsulated in objects ain't OO code"

There is no difference between writing code in a procedural function and writing code in a class method. They are both concerned with the writing of imperative statements which are executed in a linear fashion. If I write code which is oriented around objects then that automatically makes that code "object oriented" whether you like it or not. However, when code is actually executed the CPU cannot identify the style, or even the language, in which it was written as the compiler converts the human-readable instructions into computer-readable instructions.

"there is no way to write properly structured procedural code"

There is no such thing as "properly structured", there is either "badly structured" or "well structured". "Properly structured" is an entirely subjective description which means different things to different people. Spaghetti code is code which either has no structure at all, or it is badly structured. It is possible to write COBOL code which is well structured just as it is possible to write Java code which is badly structured spaghetti.

"you again had a confusion between procedural code and code written in procedural language"

I disagree. If you write code in a procedural language it is procedural. If you write code in an OO language and implement encapsulation, inheritance and polymorphism then your code is OO.

Let me expand on some of the points he has made so far:

If you write in a procedural language with modular design approach, it is actually OO design

I disagree. Modular programming was practiced in procedural languages long before OOP and OOD came on the scene. OO design requires the use of Object Oriented Concepts such as Objects/Classes, Information hiding, Inheritance, Interfaces and Polymorphism. Modular design does not, so to say that the two are the same is plainly wrong.

The OO design approach really, doesn't necessarily require OO syntax

I disagree. If you look at the description of Object Oriented Concepts in OOD you will see that it requires support for OO concepts in the language. None of these concepts existed in the COBOL versions that I used, so I cannot see how it is possible to use OOD for a language that does not support OO concepts.

It is a methodology, and the OO mindset is far more important than OO syntax itself.

I disagree. OOP is not a state of mind, it is an implementation of OO concepts. You cannot implement OO without OO syntax, and if you use OO syntax in your programs then it is OOP.

Just because you use objects doesn't mean you write OO code

I disagree. If you write programs which are oriented around objects then you are, by definition, doing Object Oriented Programming. It may not be the most efficient or the most effective, but it is still OO.

Code which has a structure is not 'properly' structured

I disagree. Either code has a discernible structure which can be shown in a structure chart or it does not. It is either spaghetti code (or ravioli code in the case of OOP) or it is not. This is a boolean condition in which the result is either TRUE or FALSE, YES or NO. There is no "yes it is but no it isn't".

This person does not understand the difference between "procedural" and "object oriented". There is a large amount of functionality which exists in both. OO code is exactly the same as procedural code except for the addition of encapsulation, inheritance and polymorphism. Both paradigms have lines of code containing imperative statements which are executed in a linear fashion. Both paradigms support expressions, operators, control structures, built-in functions and user-defined functions. Both paradigms support the concept of Modular Programming and Structured Programming. One paradigm supports encapsulation, inheritance and polymorphism while the other does not.
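The overlap between the two paradigms can be illustrated with a minimal sketch. The names used here (`order_total`, `Order`) are hypothetical and exist only for this illustration. The function is structured procedural code built from sequence, iteration and selection, while the class contains the same imperative statements encapsulated alongside the data on which they operate.

```php
<?php
// Procedural version: structured code built from sequence,
// iteration and selection. Amounts are integers (pence).
function order_total(array $prices, int $discount_threshold): int
{
    $total = 0;                              // sequence
    foreach ($prices as $price) {            // iteration
        $total += $price;
    }
    if ($total > $discount_threshold) {      // selection
        $total -= intdiv($total, 10);        // 10% discount
    }
    return $total;
}

// OO version: the same imperative statements, now encapsulated
// in a class together with the data they operate on.
class Order
{
    private $prices = [];
    private $discountThreshold;

    public function __construct(int $discount_threshold)
    {
        $this->discountThreshold = $discount_threshold;
    }

    public function addPrice(int $price)
    {
        $this->prices[] = $price;
    }

    public function total(): int
    {
        $total = 0;
        foreach ($this->prices as $price) {
            $total += $price;
        }
        if ($total > $this->discountThreshold) {
            $total -= intdiv($total, 10);
        }
        return $total;
    }
}

$order = new Order(10000);
$order->addPrice(10000);
$order->addPrice(5000);

echo order_total([10000, 5000], 10000), PHP_EOL;
echo $order->total(), PHP_EOL;
```

Both calls produce the same result from the same statements. The only difference is that the class version adds encapsulation.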

In my reply I also responded to a previous question of his:

"Oh yeah, do you happen to be the same Tony Marston who made a fool of himself on SitePoint some time ago?"

I have often been criticised for my different views, which have sometimes been described as heretical, but I am allowed to have a different view, just like everyone else on this planet. I do not care that my views are different as I write software which pleases my paying customers, not a bunch of ignorant or confused developers.

He followed up with this reply:

I see, so you really are that Tony Marston who keeps embarrassing himself. Of course you are allowed to have different viewpoints, different doesn't necessarily mean bad. But your viewpoints are mostly incorrect, inferior and confused, yet you call the other developers ignorant and confused developers, when you are exactly ignorant and confused yourself. People criticize you not because you have those incorrect opinions, but that you try to convince them that your opinions are good when they clearly are bad. This is exactly why everyone was against you in that Singleton vs Dependency Injection thread.

For those of you who are in the dark, my opinions on dependency injection were published in Dependency Injection is EVIL. If you read it carefully you will notice that I actually say that DI can be beneficial in appropriate circumstances, but that it should not be applied indiscriminately. In my applications there are places where I DO use DI, but there are also places where I DO NOT.

I responded to his post with this:

"I see, so you really are that Tony Marston who keeps embarrassing himself."

I am not embarrassed in the least. I find the criticism of my work to be very amusing. I sometimes laugh so much I can feel the tears running down my trouser leg.

"Of course you are allowed to have different viewpoints, different doesn't necessarily mean bad."

How very kind of you. It makes a change from being told that my opinions are bad simply because they are different.

"But your viewpoints are mostly incorrect, inferior and confused"

Then how come the code which I write using my "incorrect, inferior and confused" methods still produces applications which not only work, but work very well? All without the use of those abominations called dependency injection and object-relational mappers.

In this post I made the following statement:

"I said your opinions are bad because your opinions are incorrect, outdated and confused, not because they are different."

My opinions are not incorrect for the simple reason that the code which I create most definitely works, and has done for over a decade. My opinions are not outdated simply because I refuse to accept all the add-on definitions to OOP which have been dreamt up by a bunch of pseudo intellectuals. OOP consists of nothing more than encapsulation, inheritance and polymorphism, and everything else is an optional extra.

I have made a list of the OO add-ons which I ignore in A minimalist approach to Object Oriented Programming with PHP.

He made yet more erroneous statements in this reply to which I responded with the following:

"Well just because your code works for you, doesn't mean your opinions are correct."

If my methods produce cost-effective software which works then those methods cannot be wrong.

"Your code works for your legacy applications/frameworks"

If by "legacy" you mean "mature" and "proven" then that is precisely what my customers want. Large corporations do not want leading edge, bleeding edge, immature and unproven applications, they want something with a pedigree. That is what I provide.

"your legacy PHP 4 application"

FYI it currently runs on PHP 7, and has run through all versions of PHP 5. It is as current as it needs to be.

"And those are NOT add-on definitions of OOP, they are fundamental and universally agreed concepts."

I suggest you look up the dictionary definition of "fundamental". In http://dictionary.cambridge.org/dictionary/english/fundamental it is described as "forming the base, from which everything else develops". The original definition of OOP by Alan Kay (who invented the term) specifies nothing more than encapsulation, inheritance and polymorphism, so everything which came after that IS an addition and IS optional. The fact that some people consider that those optional extras are an essential part of OOP just shows that it is THEY who are confused, not me.

"In fact, the more confused, old-fashioned and incompetent you are, the more counter-examples I will have when I teach people how to avoid bad habits. In a way, I should thank you for this."

And I should thank you for encouraging techniques that will prevent any programmer from creating software which will be a serious competitor to mine. The true purpose of a software developer is to develop software which impresses the paying customer with its cost-effectiveness, not its ability to impress a bunch of developers with its fashionable yet overly-complex implementation. I sell my enterprise application to large corporations all over the world, so next to that monster which you and your cohorts would produce my software would appear sleek and slim.

In this post he made the following statements:

Its funny that you referenced Alan Kay when you did not even understand what Alan Kay's vision of Object and OOP are. Alan Kay's idea for Objects are actor models, which communicate with each other by sending messages. Even with the 3 basic characteristics of OOP you still fail miserably. Your singleton breaks encapsulation, your inheritance is totally wrong when you inherit 9000 lines of base class, and your polymorphism is nowhere to be found. Either way you are confused about OOP and you are at the bottom of your heart, a procedural programmer.

I asked him to provide a link to any article written by Alan Kay in which he said that OOP is about sending messages, but he could not.

I asked him to provide a link to any article which explained how a singleton could possibly break encapsulation, but he could not.

In this post he made some more questionable statements:

Yes, your singleton breaks encapsulation, it always does. When you use singleton, the object stored as singleton becomes a glorified global variable. When your client code uses singleton, it becomes a hidden dependency that cannot be tested or maintained. Yes, your inheritance is done wrong for that 9000 lines of God class, because your parent class breaks SRP and your child classes are mere dumpers for the garbage from this god parent class. And your polymorphism is indeed nowhere to be found, because you have done inheritance wrong in the first place, and blindly giving all responsibilities to all child classes ain't the right way to do polymorphism.

How so? In OOP inheritance is the primary method for sharing reusable code. In my main enterprise application there are over 400 database tables for which I have a separate class. Each class contains the business rules for a single designated database table. There is a lot of code which I could duplicate in each table class, but instead I moved all that code into an abstract table class which is then inherited by every concrete class. There is no limit to the amount of code which can be shared this way. How can this possibly be the "wrong" use of inheritance? There is NO limit on the amount of code which an abstract class may contain. In fact, if you looked at the objectives of OOP you would see that it actually encourages the creation of more reusable code, not less.

In his article Object Composition vs. Inheritance the author Paul John Rajlich wrote the following:

Most designers overuse inheritance, resulting in large inheritance hierarchies that can become hard to deal with. Object composition is a different method of reusing functionality.

....

However, inheritance is still necessary. You cannot always get all the necessary functionality by assembling existing components.

....

The disadvantage of class inheritance is that the subclass becomes dependent on the parent class implementation. This makes it harder to reuse the subclass, especially if part of the inherited implementation is no longer desirable. ... One way around this problem is to only inherit from abstract classes.

Guess who doesn't overuse inheritance by creating large inheritance hierarchies? Me!

Guess who avoids this problem by only inheriting from abstract classes? Me!

So if I AM NOT doing what this author suggests is bad, and I AM doing what he suggests is good, how can you possibly say that I am wrong?

By having each concrete table class inherit from a single abstract table class this allows me to make extensive use of the Template Method Pattern which was described in the Gang of Four book as being "a fundamental technique for code reuse". If increasing the amount of reusable code is one of the aims of OOP, and I am using a well-known design pattern which achieves this, then how can you possibly say that I am wrong?
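The way in which an abstract class and the Template Method Pattern work together can be shown with a heavily simplified sketch. Every name in it (`AbstractTable`, `insertRecord`, `preInsert`, `postInsert`, `doInsert`, `Product`, `Customer`) is hypothetical and chosen for illustration only, and the database write is replaced with a stand-in. The template method fixes the order of the steps, while each subclass overrides only the hooks it needs.

```php
<?php
// Hypothetical, heavily simplified stand-in for an abstract table class.
abstract class AbstractTable
{
    protected $tableName = '';
    protected $errors = [];

    // Template method: the sequence of steps is fixed here once
    // and inherited by every concrete table class.
    public function insertRecord(array $row): array
    {
        $this->errors = [];
        $row = $this->preInsert($row);          // customisable hook
        if (empty($this->errors)) {
            $row = $this->doInsert($row);       // invariant step
            $row = $this->postInsert($row);     // customisable hook
        }
        return $row;
    }

    public function getErrors(): array
    {
        return $this->errors;
    }

    // Hooks have empty default implementations, so a subclass
    // need only override the ones it actually uses.
    protected function preInsert(array $row): array
    {
        return $row;
    }

    protected function postInsert(array $row): array
    {
        return $row;
    }

    // Stand-in for the real database write (no DAO in this sketch).
    protected function doInsert(array $row): array
    {
        $row['_table'] = $this->tableName;
        return $row;
    }
}

// A concrete class containing one table-specific business rule.
class Product extends AbstractTable
{
    protected $tableName = 'product';

    protected function preInsert(array $row): array
    {
        if (($row['price'] ?? 0) <= 0) {
            $this->errors['price'] = 'Price must be greater than zero';
        }
        return $row;
    }
}

// A concrete class with no custom rules inherits everything.
class Customer extends AbstractTable
{
    protected $tableName = 'customer';
}

$product = new Product();
print_r($product->insertRecord(['price' => 5]));
$product->insertRecord(['price' => 0]);
print_r($product->getErrors());
```

Adding another table in this sketch means adding another small subclass. The abstract class itself is not touched.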

I have written more on this topic in the following:

Then you obviously haven't looked very far. Polymorphism becomes available when the same method signature is shared by many different objects, which then allows a piece of code which calls that method to work on any object which implements that method. In my framework every one of my page controllers calls methods on each Model object which are defined in the abstract table class. As I pointed out in the previous section that abstract class is inherited by every one of my 400 table classes, which means that every one of my 40 Controllers can be used with every one of my 400 Models. How can this situation NOT be described as polymorphism?

Now do the maths - if I have 40 controllers each of which can be used on any of my 400 Model classes then that results in 40 x 400 = 16,000 opportunities for polymorphism. Do you see that - SIXTEEN THOUSAND instances of polymorphism which you failed to spot. Are you blind, or just intellectually challenged?
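A minimal sketch of this kind of polymorphism follows. The class and function names (`AbstractTable`, `Customer`, `Product`, `list_controller`) are hypothetical, and the database read is a stand-in. The point being illustrated is that the controller never names a concrete class. It relies only on a method signature which every subclass inherits.

```php
<?php
// Hypothetical stand-in for the abstract table class: every
// subclass inherits the same getData() signature.
abstract class AbstractTable
{
    protected $tableName = '';

    public function getData(): array
    {
        // Stand-in for a database read.
        return ['table' => $this->tableName];
    }
}

class Customer extends AbstractTable
{
    protected $tableName = 'customer';
}

class Product extends AbstractTable
{
    protected $tableName = 'product';
}

// Hypothetical "list" controller: it is given a class name at
// runtime and calls only the shared method.
function list_controller(string $class_name): array
{
    $model = new $class_name();
    return $model->getData();
}

// The same controller works with any class in the hierarchy.
foreach (['Customer', 'Product'] as $class_name) {
    print_r(list_controller($class_name));
}
```

Each additional controller written in this style can be paired with each table class, which is where the multiplication of controllers by Models comes from.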

In this post he came up with the following:

Yes, your singleton breaks encapsulation, it always does. When you use singleton, the object stored as singleton becomes a glorified global variable. When your client code uses singleton, it becomes a hidden dependency that cannot be tested or maintained.

I'm sorry to burst your bubble, but the two terms are NOT connected:

Defining a class, instantiating a class and storing a single instance of a class are different and unrelated activities.

In this post he said:

With Singletons, you kill polymorphism since its impossible to swap implementation of this class.

I countered that argument with the following:

Rubbish. Take a look at the following code:

```php
<?php
// Any class name can be supplied at runtime.
$class_name = "foo";
// Obtain the single shared instance of that class.
$object = singleton::getInstance($class_name);
// Call a method which the class is expected to provide.
$result = $object->getData();
?>
```

Here I can change the contents of the variable $class_name, obtain a singleton of the associated object, then call a method on that object without any problem whatsoever. I have been doing just that in my framework since 2005, so don't tell me it can't be done.

As you should be able to see, provided that the specified class contains the getData() method (which every one of my concrete table classes does by virtue of the fact that they all inherit from the same abstract table class which contains that method), this code will work.
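The text does not show how `singleton::getInstance()` is implemented, so the following is only a sketch of one possible implementation under that assumption: a registry which holds one instance per class name. The classes `foo` and `bar` are hypothetical placeholders.

```php
<?php
// Assumed implementation, not taken from the framework: a registry
// which creates each class once and then returns the same instance.
class singleton
{
    private static $instances = [];

    public static function getInstance(string $class_name)
    {
        if (!isset(self::$instances[$class_name])) {
            self::$instances[$class_name] = new $class_name();
        }
        return self::$instances[$class_name];
    }
}

// Two unrelated placeholder classes sharing a method signature.
class foo
{
    public function getData()
    {
        return 'data from foo';
    }
}

class bar
{
    public function getData()
    {
        return 'data from bar';
    }
}

// The class name is just a variable, so the implementation behind
// the call can be swapped without changing the calling code.
foreach (['foo', 'bar'] as $class_name) {
    $object = singleton::getInstance($class_name);
    echo $object->getData(), PHP_EOL;
}
```

In this sketch the single-instance behaviour lives entirely in the registry, while the polymorphism comes from the shared `getData()` signature.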

I'm sorry to rain on your parade, but the two terms are NOT connected:

Instantiating a class into an object and calling an object method are different and unrelated activities.

In this post he wrote:

MVC on its own is not a complete architectural pattern, it is rather a subpattern suited to the UI layer.

I disagree, and so do the authors of all the articles I have read about this pattern. The View and Controller belong in the UI/Presentation layer, and the Model clearly belongs in the Business/Domain layer. The main reason that database access was not separated out of the Model was either because the application did not access a database (it may have been used to manipulate an image, for example), or that the thought of being able to switch from one database to another was not regarded as an option worthy of serious consideration.

MVC on its own is an example of a 2-Tier Architecture. When you combine it with the 3-Tier Architecture you get the structure shown in Figure 1. This could be called MVCD, or Model-View-Controller-DAO.
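As a rough illustration of the combined structure, here is a minimal sketch with one component per box. All of the names (`Dao`, `CustomerModel`, `HtmlView`, `list_controller`) are hypothetical, and the database is replaced by an in-memory array so that the example is self-contained.

```php
<?php
// Data access layer (DAO): the only component which knows how
// the data is stored. An array stands in for the database.
class Dao
{
    private $rows = [
        'customer' => [['id' => 1, 'name' => 'Smith']],
    ];

    public function select(string $table): array
    {
        return $this->rows[$table] ?? [];
    }
}

// Business layer (Model): applies business rules, delegates
// all data access to the DAO.
class CustomerModel
{
    public function getData(): array
    {
        $dao  = new Dao();
        $rows = $dao->select('customer');
        foreach ($rows as &$row) {
            $row['name'] = strtoupper($row['name']);   // stand-in business rule
        }
        unset($row);
        return $rows;
    }
}

// Presentation layer, part 1 (View): turns data into HTML.
class HtmlView
{
    public function render(array $rows): string
    {
        $html = '<ul>';
        foreach ($rows as $row) {
            $html .= '<li>' . htmlspecialchars($row['name']) . '</li>';
        }
        return $html . '</ul>';
    }
}

// Presentation layer, part 2 (Controller): receives the request,
// calls the Model, then hands the result to the View.
function list_controller(string $model_class, string $view_class): string
{
    $model = new $model_class();
    $view  = new $view_class();
    return $view->render($model->getData());
}

echo list_controller('CustomerModel', 'HtmlView'), PHP_EOL;
```

Swapping the storage mechanism in this sketch would mean changing only `Dao`, and changing the output format would mean changing only the View.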

In this post he said the following:

MVC in general is an incomplete architectural pattern that only distinguishes three components in your application, but not all. In a smaller and simpler application, sure M, V and C themselves alone are sufficient. In more complex applications, you need more components, such as DAO, Service Objects, Helper Objects, etc.

I disagree. I have been writing database applications in PHP since 2003, and my application has grown from 35 tables to over 400. That growth has not necessitated any new layers/tiers, just an increase in the number of objects in the existing layers:

In my application all business/domain logic is split across the numerous Model classes, with each Model containing only those business rules which apply to that entity. I do not put business rules into separate service or helper objects as that would break encapsulation.

I disagree. The definition of a God object contains the following:

A common programming technique is to separate a large problem into several smaller problems (a divide and conquer strategy) and create solutions for each of them. Once the smaller problems are solved, the big problem as a whole has been solved. Therefore a given object for a small problem need only know about itself. Likewise, there is only one set of problems an object needs to solve: its own problems.

In contrast, a program that employs a god object does not follow this approach. Most of such a program's overall functionality is coded into a single "all-knowing" object, which maintains most of the information about the entire program, and also provides most of the methods for manipulating this data. Because this object holds so much data and requires so many methods, its role in the program becomes god-like (all-knowing and all-encompassing). Instead of program objects communicating among themselves directly, the other objects within the program rely on the single god object for most of their information and interaction. Since this object is tightly coupled to (referenced by) so much of the other code, maintenance becomes more difficult than it would be in a more evenly divided programming design. Changes made to the object for the benefit of one routine can have unintended effects on other unrelated routines.

A god object is the object-oriented analogue of failing to use subroutines in procedural programming languages, or of using far too many global variables to store state information.

Note the use of the words "most of a program's overall functionality" and "single object". The class in question does not actually create a single object. It is an abstract class, and therefore cannot be instantiated into an object. It is inherited by every one of my table classes (I currently have 400, but this number increases when I add new functionality) in order to provide standard code for accessing any unspecified database table. Each concrete class inherits all the standard code from the abstract class and need only contain code which is specific to that table. Note also that all the methods in my abstract class are non-abstract, which means that they contain implementations as well as signatures, and I don't have to define them in any concrete class unless I want to override their implementations.

Saying that my abstract class is a God object simply because of its size proves nothing except that you can count, but that you either cannot read or cannot understand what you read. My abstract class does not exhibit the characteristics or symptoms of a God class as described in that article, so your accusation is without substance. It may have 9,000 lines, but that includes blank lines and comments. These are split across 254 methods, so that gives an average of about 35 lines per method. These methods can be categorised as follows:

If you don't understand how these methods are used at runtime then take a look at the diagrams in UML diagrams for the Radicore Development Infrastructure.

My abstract class may have more methods than you are used to, or more than you can possibly imagine, but that's simply because your imagination is smaller than mine, your capabilities are smaller than mine, and your applications are smaller than mine. Or, to put it another way, compared to mine your imagination is puny, your intellect is puny, and your applications are puny. I develop man-sized applications for the enterprise, you develop toys for boys. If you don't have the mental capacity to deal with a class which has that number of methods, then how can you have the mental capacity to deal with the same number of methods split across multiple classes? You are not increasing the readability of the code, you are replacing highly cohesive code with ravioli code, which makes navigation through the code for maintenance purposes more difficult.

The true definition of a god class contains the phrase "Most of such a program's overall functionality is coded into a single 'all-knowing' object". While you may think that 9,000 lines is a lot, it is just a small part of the 53,000 LOC that exist in my reusable library. This means that my abstract class contains 9/53rds or 17% of the overall functionality. I don't know who taught you maths, but 17% cannot be described as "most" in anybody's language.

One of the characteristics or symptoms of a true God class is that if the application expands then the God class expands with it. The application cannot be expanded without amending the God class. This situation simply does not exist in my framework. In the past 10 years my enterprise application has expanded from 35 database tables to over 400, and as my so-called God class does not contain any references to any tables it is totally unaffected by their number. When I add a new table to my application all I do is create a new table class which inherits from, not expands, that so-called God class. I then create as many user transactions as I want from my library of Transaction Patterns, each of which will reference that table class using generic methods which were defined in that so-called God class. Did you read that? Each new table class inherits from the so-called God class, it does not cause it to expand in the slightest. So if that class does not expand when the application expands it does not suffer the symptoms of a God class, therefore it does not deserve to be called one.

You may be confused by the fact that in order to implement inheritance you have to use the word "extends" in your code, as in concrete_class extends abstract_class, and this word can have different meanings depending on the context. For example, if you "extend" a house by adding on a conservatory, a new wing or new floor, the end result is that the house itself becomes bigger. This is not what happens in OOP. The word "extends" should have been replaced with "inherits" so that what you see is that the original abstract class is completely unchanged, and that what you have actually done is create a totally new class which incorporates a copy of the abstract class, but with some additions. To continue with the house analogy, the original house is unchanged, but what you end up with is a copy of that house with the addition of a conservatory, wing, or floor.
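The effect of "extends" can be checked with a small sketch using PHP's built-in `get_class_methods()` and `method_exists()` functions. The class and method names are hypothetical. The subclass gains everything in the parent plus its own addition, while the parent class is left exactly as it was.

```php
<?php
// Hypothetical abstract class with two public methods.
abstract class AbstractTable
{
    public function getData()
    {
        return [];
    }

    public function insertRecord(array $row)
    {
        return $row;
    }
}

// The subclass adds one method of its own.
class Customer extends AbstractTable
{
    public function sendWelcomeEmail()
    {
        return 'sent';
    }
}

// The parent still has only its original methods ...
echo count(get_class_methods('AbstractTable')), PHP_EOL;

// ... while the subclass has the inherited ones plus its addition.
echo count(get_class_methods('Customer')), PHP_EOL;

// The addition exists only in the subclass.
var_dump(method_exists('AbstractTable', 'sendWelcomeEmail'));
var_dump(method_exists('Customer', 'sendWelcomeEmail'));
```

However many subclasses are added in this way, the method list of the abstract class does not grow.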

This sort of confusion is not new in the software world. Do you remember Hungarian Notation? This was invented by a Microsoft programmer called Charles Simonyi, and was supposed to identify the kind of thing that a variable represented, such as "horizontal coordinates relative to the layout" and "horizontal coordinates relative to the window". Unfortunately he used the word type instead of kind, and this had a different meaning to those who later read his description, so they implemented it according to their understanding of what it meant instead of the author's understanding. The result was two types of Hungarian Notation - Apps Hungarian and Systems Hungarian. You can read a full description of this in Making Wrong Code Look Wrong by Joel Spolsky.

In this post Hall of Famer made the following statement:

I was saying that, there may be some child classes of your Default_Table that will never use functionality such as file uploading. It is not that the functionality 'may be' used, its never used. You said that you have controllers such as FileUploadingController, PDFController and CSVController. But then there are some child classes that do not support File Uploading, PDF/CSV conversion, then the functionalities from your Default_Table abstract class are completely useless to these child classes. This is completely opposite to what OOD is meant to be, how is it even considered an 'abstract' class if it tries to do everything? It looks more like a Utility class to me lmao. Your confusion arises from that you believe the methods are appropriate because they are used by one of the controllers, but this is not the correct criterion. Your controllers use child classes of Default_Table, not Default_Table itself. So if the methods are not used by certain child classes, it means the child classes should not inherit this method, and your God class is doing too much.

There is no rule which supports your ridiculous claim. Can you provide a URL which describes this rule? No, I thought not. If there is no such rule then I am perfectly within my rights to ignore it, and I choose to exercise that right.

You seem to be a follower of the traditional approach whereby you have one Controller for each Model, where you have to build each Model class by hand, then build - again by hand - a Controller which can access that Model. In such a method it is possible for the Model to have only those methods that are needed by that Controller, and for the Controller to actually use all of those methods.

I call this the "neanderthal approach" as it fails to meet one of the primary objectives of OOP which is to create more reusable code. There is no reusability if you have to build each Model and each Controller by hand. It also means that if you want to add extra functionality at a later date you have to modify both the Model and the Controller.

My method is far superior. I do not have to build any Models by hand as they are generated by my Data Dictionary. Each concrete table class starts off by inheriting all the standard code in the abstract table class, with a few lines in the class constructor to identify the table's name and its column details. I do not have to write any code to validate user input before it is sent to the database as that is handled automatically within the framework. I do not have to build any Controllers by hand as my framework contains a library of pre-built and reusable Controllers, with a separate controller for each Transaction Pattern. When I want to create a user transaction which does something with a particular database table I simply go to my Data Dictionary, select that table, select a Transaction Pattern then press a button. This will generate one or more component scripts and add records to the MENU database which allow that/those user transactions to be run immediately.
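To give an idea of what such a class could look like, here is a sketch under stated assumptions. The property names (`tableName`, `fieldSpec`), the layout of the column details and the `validate()` method are all invented for this illustration and are not copied from the framework. The concrete class contains nothing but a constructor which identifies the table and its columns, and the inherited code uses those details to validate user input.

```php
<?php
// Hypothetical abstract class: generic validation is driven
// entirely by the column details supplied by each subclass.
abstract class AbstractTable
{
    protected $tableName = '';
    protected $fieldSpec = [];     // column name => rules
    protected $errors = [];

    public function validate(array $row): bool
    {
        $this->errors = [];
        foreach ($this->fieldSpec as $field => $spec) {
            $value = $row[$field] ?? null;
            if (!empty($spec['required']) && ($value === null || $value === '')) {
                $this->errors[$field] = 'This field is required';
            } elseif ($value !== null && isset($spec['size'])
                && strlen((string) $value) > $spec['size']) {
                $this->errors[$field] = 'Value is too long';
            }
        }
        return empty($this->errors);
    }

    public function getErrors(): array
    {
        return $this->errors;
    }
}

// What a generated table class might look like: a constructor
// and nothing else.
class Customer extends AbstractTable
{
    public function __construct()
    {
        $this->tableName = 'customer';
        $this->fieldSpec = [
            'customer_id' => ['type' => 'integer', 'required' => true],
            'name'        => ['type' => 'string', 'size' => 40, 'required' => true],
        ];
    }
}

$customer = new Customer();
var_dump($customer->validate(['customer_id' => 1, 'name' => 'Smith']));
var_dump($customer->validate(['customer_id' => 1, 'name' => '']));
print_r($customer->getErrors());
```

Because the class body is nothing more than data about the table, it is the kind of file which a generator could produce from a schema without any hand coding.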

Note these differences between your neanderthal approach and mine:

My approach requires less effort, which means that it makes me far more productive. Your method is a relic of the stone age and is unfit for the 21st century.

I disagree. The Single Responsibility Principle (SRP), also known as Separation of Concerns (SoC), states that an object should have only a single responsibility, and that responsibility should be entirely encapsulated by the class. All its services should be narrowly aligned with that responsibility. But what is this thing called "responsibility" or "concern"? How do you know when a class has too many and should be split? When you start the splitting process, how do you know when to stop? In his article Test Induced Design Damage? Robert C. Martin (Uncle Bob) provides this description:

How do you separate concerns? You separate behaviors that change at different times for different reasons. Things that change together you keep together. Things that change apart you keep apart.

GUIs change at a very different rate, and for very different reasons, than business rules. Database schemas change for very different reasons, and at very different rates than business rules. Keeping these concerns (GUI, business rules, database) separate is good design.

I don't know about you, but I recognise the separation of GUI, business rules, and database access as the description of the 3-Tier Architecture, upon which my framework is based. Not only that, because I have also split my Presentation layer into two smaller components, a Controller and a View, it is also an implementation of the Model-View-Controller design pattern. This produces the structure shown in Figure 1:

Figure 1 - The MVC and 3-Tier architectures combined

My abstract class is inherited ONLY by Model classes, and contains absolutely NO logic which rightly belongs in a Controller, View or DAO, so for you to say that it does too much and therefore breaks SRP cannot be supported by the facts. It does a lot, and perhaps it does more than you are used to, but that is no reason to assume that it breaks SRP. Besides, if I were to break that abstract class into smaller units I would be breaking encapsulation and the concept of cohesion, and those would be mistakes of far greater magnitude.

You should also note that SRP is all about the separation of logic, not the separation of information. Logic is code while information is data. Taking the data for an entity and splitting it across several classes would violate encapsulation and would therefore be wrong. You should also note that you should not split the logic across several objects unless you can give that logic a sensible (and short) name and fit it into a structure diagram as shown in Figure 1. Such terms as "Service" and "Helper" would be meaningless. If your method produces a structure that has so many objects that you cannot fit it into a single page diagram, or you cannot give each object a meaningful name, then you have probably gone too far. Splitting a monolithic piece of code into smaller parts is a good idea, but you should have the intelligence to know what to separate out and when to stop separating. The idea that you should extract until you drop I consider to be too ridiculous for words.

There follows a long exchange of argument and counter-argument which proves nothing except for the fact that we disagree on almost everything. He then adds to his list of stupid arguments in this post in which he said the following:

I already looked at your application architecture, and although it breaks down into 3 tiers, each tier of your application still contains a handful of god classes that do too much.

Excuse me, it is simply not possible for an application to have more than one God class. The definition of a God class clearly states "Most of such a program's overall functionality is coded into a single 'all-knowing' object". The word "single" should tell you that there cannot be more than one. The word "most", which equates to "more than 50%", should tell you that there cannot be multiple objects each containing more than 50% of the code. It is simply not mathematically possible to have multiple god objects. I don't care who you are, you are not allowed to redefine words, especially simple words such as "single" and "most", in order to promote your personal agenda.

How can I possibly say such a thing? The principle of encapsulation can be described as follows:

The act of placing an entity's data and the operations that perform on that data in the same class. The class then becomes the 'capsule' or container for the data and operations.

Note that this requires ALL the properties and ALL the methods to be placed in the SAME class. Breaking a single class into smaller classes so that the count of methods in any one class does not exceed an arbitrary number is therefore a bad idea as it violates encapsulation and makes the system harder to read and understand. It would also decrease cohesion and increase coupling which would be the exact opposite of what should be achieved.

As you should be able to see, if I moved some methods to another class then I would be ignoring the ALL part of that description, and as far as I am concerned that cannot be justified under ANY circumstances.

There are some numpties out there who create artificial rules such as "a class should not have more than N methods" and "a method should not have more than N lines of code" where N is a totally arbitrary number (usually 10, but I have seen lower). If I were to follow this "advice" and split the 138 public methods across 13 different classes then what would be the result?

This situation would therefore be adding to the maintenance burden instead of alleviating it, so should not be recommended by any sane person at all.

SRP makes no mention of size, so I regard any such limitation as artificial and feel no obligation to be bound by it.

How can I possibly say such a thing? The principle of cohesion can be described as follows:

Cohesion is a measure of the strength of association of the elements inside a module. A highly cohesive module is a collection of statements and data items that should be treated as a whole because they are so closely related. Any attempt to divide them would only result in increased coupling and decreased readability.

Simply put, this states that functions/methods which are related should be contained within the same class, and that a class should only contain functions/methods which are related. Putting all functions into a single class would be just as wrong as putting each function into a separate class. The correct grouping of functions requires a modicum of intelligence, and all evidence points to the fact that this is missing in the greater programming community. Only the intelligent few seem to have this ability, while the remainder are nothing more than Cargo Cult programmers who are going through the motions and assuming that what they are doing actually works as intended because they are incorporating all the right buzzwords and jumping on the same bandwagon as all the other lemmings.

As you should be able to see, if I moved some methods to another class then I would be decreasing cohesion and increasing coupling, and as far as I am concerned that cannot be justified under ANY circumstances.

SRP makes no mention of size, so I regard any such limitation as artificial and feel no obligation to be bound by it.

In this post he said the following:

And nope, you don't understand SOLID principles. You don't even understand SRP, or maybe you understand SRP but breaks it anyway. All the principles exist for good reasons, because it makes development easier, faster and more cost-effective.

This is where I have to strongly disagree. I have read many articles which supposedly show how a particular principle can be used, but all I see is the extra code that needs to be written for no apparent benefit. When I say "no apparent benefit" I mean that the code still does what it did before the principle was applied, but with more code, with more levels of indirection. More code means longer to write, longer to run, longer to read and therefore more difficult to understand and maintain. When you can show me a principle which results in less code being written I will sit up and take notice, but until then I shall treat it as a waste of time.

In my long career in software development I have come across many ideas put forward by many people as being the silver bullet that will solve all the current problems, but the biggest problem with these ideas is that they are usually badly written, are too vague and imprecise, and are open to interpretation and therefore mis-interpretation. The levels of mis-interpretation can vary between "extreme" and "perverse". The first big problem is therefore which interpretation do you choose? Is it the "moderate" or "extreme" version? When do you apply the idea? When do you stop applying the idea? This leads me to the following observation:

There are two ways in which an idea can be implemented - intelligently or indiscriminately.

Those who apply an idea or principle indiscriminately, who apply it in inappropriate circumstances, or who don't know when to stop applying it, are announcing to the world that they do not have the brain power to make an informed decision. They simply do it without thinking as they assume that someone else, namely the person who invented that principle, has already done all the necessary thinking for them. This leads to a legion of Cargo Cult programmers, copycats, code monkeys and buzzword programmers who are incapable of generating an original thought.

This is a great mistake, and one that I learned NOT to make many years ago. I will only implement those ideas that have proven, in my personal experience, to be beneficial or have merit. This has led me to ignore a large number of ideas which have been approved by the Paradigm Police/OO Taliban and to follow instead an older, more mature set of ideas which, due to their unfashionable nature, have been described as "unorthodox" at best or "heretical" at worst.

When it comes to implementing a solution, and there is a choice between two alternatives - one simple, one complex - I will always follow the KISS principle and stick with the simplest solution. This follows on from the following statement made by C.A.R. Hoare:

There are two ways of constructing a software design. One way is to make it so simple that there are obviously no deficiencies. And the other way is to make it so complicated that there are no obvious deficiencies.

I have expanded this statement into the following:

Any idiot can write complex code that only a genius can understand. A true genius can write simple code that any idiot can understand.

The mark of genius is to achieve complex things in a simple manner, not to achieve simple things in a complex manner.

Despite the fact that I have been using my heretical approach for over ten years there are people out there who dismiss it out of hand as being rubbish, not because they have examined it and compared it with their own implementation, but because they have been told that I do not follow the "right" OO standards therefore everything I do must surely be wrong. Being wrong it must be bad, and being bad it must exhibit the characteristics of bad software such as being unreadable, difficult to maintain, take longer to write, longer to debug, et cetera, et cetera. This is a premise which leads to a conclusion. I shall now demonstrate that the conclusion is totally wrong which therefore proves that the original premise must also be wrong.

The fundamental point of my technique is that it enables me to create basic components to maintain the contents of database tables WITHOUT WRITING ANY CODE WHATSOEVER. This is in direct contrast to the volumes of code that need to be written just to comply with those academic yet impractical principles that are so loved by the Paradigm Police and the OO Taliban.

Throughout the exchange of views between myself and Hall of Famer in What's Wrong With Object-Oriented Programming? and also MVC vs. OOP he has continuously proved that he cannot understand simple concepts and keeps on insisting that any opinion which is different from his is automatically wrong. He is operating under the belief that if he keeps repeating the same lies over and over again that eventually they will be perceived as the truth. By constantly casting aspersions on my competency as a programmer, the quality of my work, and even the competency of my customers, he is guilty of gaslighting, but his words will never convince me that I am wrong for the simple reason that my code works, and any engineer, software or otherwise, will tell you that something that works cannot be wrong. Not only do my methods work, they actually produce results which are more cost-effective than his simply because I can produce working components at a much faster rate than he can, and everyone knows that faster means cheaper. My framework also provides many features which are automatically available to every component "out of the box" without the need for additional coding.

An example of his fuzzy thinking can be found in this post in which he states:

If you are a more intelligent programmer, you'd find that interfaces drastically improve your efficiency

Excuse me, but how can taking the time to write code that contributes absolutely nothing to the application be called "efficient"? If you look at Object Interfaces you will see that in PHP they are a solution to a problem encountered in statically typed languages which does not exist in dynamically typed languages such as PHP. They are a solution to a problem which does not exist, which makes them totally redundant and a violation of the YAGNI principle. To my mind the writing of code which does nothing is not "efficient", it is a total waste of time and should be avoided at all costs.
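The point about dynamic typing can be shown with a small sketch. The classes `CsvExporter` and `JsonExporter` and the function `runExport()` are hypothetical names invented for this illustration. Neither class declares or implements an interface, yet PHP resolves the `export()` call at run time, so both objects can be passed to the same function.

```php
<?php
// Hypothetical classes; neither declares or implements an interface.
class CsvExporter
{
    public function export(array $rows): string
    {
        $lines = array_map(function (array $row) {
            return implode(',', $row);
        }, $rows);
        return implode("\n", $lines);
    }
}

class JsonExporter
{
    public function export(array $rows): string
    {
        return json_encode($rows);
    }
}

// No type hint on $exporter: PHP looks up export() on whatever object arrives.
function runExport($exporter, array $rows): string
{
    return $exporter->export($rows);
}

$rows = [['a', 'b'], ['c', 'd']];
echo runExport(new CsvExporter(), $rows), "\n";
echo runExport(new JsonExporter(), $rows), "\n";
```

The sketch only demonstrates that the calls succeed without an `interface` declaration; it does not cover what happens when an object lacking an `export()` method is passed in, which would fail at run time.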

In the very next sentence he said this:

And nope, my definition of efficiency is correct, by efficiency I mean the ability to write more code with higher quality.

I'm sorry, but your definition is a crock of sh*t. The following are correct definitions:

- Efficiency is the (often measurable) ability to avoid wasting materials, energy, efforts, money, and time in doing something or in producing a desired result. In a more general sense, it is the ability to do things well, successfully, and without waste.

- Efficiency is a measurable concept, quantitatively determined by the ratio of useful output to total input.

- effective operation as measured by a comparison of production with cost (as in energy, time, and money)

- the ratio of the useful energy delivered by a dynamic system to the energy supplied to it

- the good use of time and energy in a way that does not waste any

- the difference between the amount of energy that is put into a machine in the form of fuel, effort, etc. and the amount that comes out of it in the form of movement

- The comparison of what is actually produced or performed with what can be achieved with the same consumption of resources (money, time, labor, etc.). It is an important factor in determination of productivity.

- the state or quality of being efficient, or able to accomplish something with the least waste of time and effort; competency in performance

- accomplishment of or ability to accomplish a job with a minimum expenditure of time and effort

- the ratio of the work done or energy developed by a machine, engine, etc., to the energy supplied to it, usually expressed as a percentage

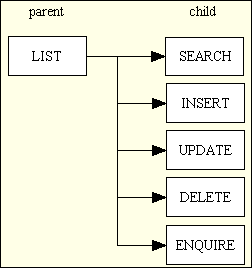

As you can see from above the term "efficiency" has nothing to do with writing more code with higher quality. "More" is not a factor. "Quality" is not a factor. "Less" is a factor. "Effort" is a factor. It is the ability to achieve a particular result with the minimum of effort and the minimum of waste. As an example, if it takes me 5 minutes to create the family of forms shown in Figure 2, but it takes you 5 hours, then clearly my method requires less effort and less time, which automatically makes it more efficient and more productive. If you think that my estimate of 5 hours is inaccurate then take my challenge and provide me with an actual figure.

Figure 2 - A typical Family of Forms

In this post he said:

Its time to stop labeling yourself as productive. You've been developing your legacy Radicore for longer than a decade, and no significant improvement has been made thus far. You call that productive?

....

You are not productive, your definition of productivity is flawed because you are just writing more code with longer time, but you aint writing more code in a given unit of time.

I'm sorry, but your definition is a crock of sh*t. The following are correct definitions:

- Productivity describes various measures of the efficiency of production. A productivity measure is expressed as the ratio of output to inputs used in a production process, i.e. output per unit of input.

- The effectiveness of productive effort, especially in industry, as measured in terms of the rate of output per unit of input.

- the rate at which goods are produced or work is completed

- A measure of the efficiency of a person, machine, factory, system, etc., in converting inputs into useful outputs.

As you can see from above the term "productivity" has nothing to do with improving anything. "Improvement" is not a factor. "Output" is a factor. "Unit of Input" is a factor. It is the ratio of output to each unit of input. It is the ability to produce more with the same resources. As an example, if it takes you 5 hours to create the family of forms shown in Figure 2, but my method allows me to do it in 5 minutes, then clearly in the same amount of time I can produce more, which automatically makes my method more efficient and more productive. If you think that my estimate of 5 hours is inaccurate then take my challenge and provide me with an actual figure.
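The arithmetic behind that comparison can be worked through in a few lines. The only inputs are the two figures quoted above (5 hours and 5 minutes per family of forms); the variable names are made up for this illustration.

```php
<?php
// Figures from the example above: time taken to create one family of forms.
$minutesSlow = 5 * 60;  // 5 hours expressed in minutes
$minutesFast = 5;       // 5 minutes

// Productivity as output per unit of input: families of forms per hour.
$familiesPerHourSlow = 60 / $minutesSlow;
$familiesPerHourFast = 60 / $minutesFast;

// How many times more output the faster method yields for the same input.
$ratio = $minutesSlow / $minutesFast;

printf(
    "%.1f versus %.1f families per hour, a ratio of %d to 1\n",
    $familiesPerHourSlow,
    $familiesPerHourFast,
    $ratio
);
```

On those figures the faster method produces 12 families of forms in the hour that the slower method needs to finish one fifth of a single family.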

Productivity is not about writing more code in a given unit of time, it is about producing a result by writing less code, and the less code you have to write the less time it takes to write it. This can be achieved by reusing code that has already been written. My framework has more reusable code than yours, which means that I have less code to write in order to achieve a result. Because I have less code to write I can achieve the result in less time, and this makes me more productive and more efficient.

My framework is not an application in its own right, it is a toolkit for building database applications. It allows me to very quickly build components which perform various actions on database tables. I have used this framework to build an ERP application which currently has 40 database tables and 4,000 tasks (user transactions), and due to the amount of code which I did not have to write I did this at a faster rate than you could possibly achieve with your framework. I can produce the same result with less effort, which means that with the same effort I can produce more. Productivity is directly related to efficiency, it has nothing to do with improvement.

Whenever a module calls another module the two modules are said to be coupled. This coupling can either be tight or loose. Loose coupling is better.

Tightly coupled systems tend to exhibit the following developmental characteristics, which are often seen as disadvantages:

- A change in one module usually forces a ripple effect of changes in other modules.

- Assembly of modules might require more effort and/or time due to the increased inter-module dependency.

- A particular module might be harder to reuse and/or test because dependent modules must be included.

The degree of loose coupling can be measured by noting the number of changes in data elements that could occur in the sending or receiving systems and determining if the computers would still continue communicating correctly. These changes include items such as:

- Adding new data elements to messages

- Changing the order of data elements

- Changing the names of data elements

- Changing the structures of data elements

- Omitting data elements

Polymorphism - the ability to substitute one class for another. This requires multiple classes to support the same method signature.

Singleton - restricts the instantiation of a class to one object. Multiple requests for an object of the same class will return the same object.

In this post he wrote:

Loose coupling has a lot to do with the ability to swap implementations

I disagree. The ability to swap implementations is called polymorphism which allows you to swap one object for another. Coupling is about the method signature which is used when one object calls another. Loose coupling helps to avoid the ripple effect when you need to make a change in the data elements which are passed from one object to another. Loose coupling and polymorphism are NOT related as it is possible to have one without the other, but the more loose coupling you have the more instances of polymorphism you may provide.

In my framework I can add or remove a column from a database table and I NEVER have to change ANY method signatures in ANY of my Models, Views, Controllers or DAOs. I do not have to change ANY properties, and I do not have to change ANY getters or setters. Compare this example of tight coupling with this example of loose coupling and you will see the difference.
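The difference can be sketched as follows. The class and method names here (`TightPerson`, `LoosePerson`, `insertPerson()`, `insertRecord()`) are hypothetical and are not taken from the framework. In the first version each column is a separate argument, so adding a column changes the method signature and every caller. In the second the whole row travels as a single array, so the signature stays the same when columns are added or removed.

```php
<?php
// Tight coupling: every column appears in the method signature.
class TightPerson
{
    public function insertPerson(string $firstName, string $lastName): array
    {
        return ['first_name' => $firstName, 'last_name' => $lastName];
    }
}

// Loose coupling: the row is passed as one associative array.
class LoosePerson
{
    public function insertRecord(array $fieldArray): array
    {
        // A real implementation would validate and write to the database here.
        return $fieldArray;
    }
}

$tight = new TightPerson();
$tightRow = $tight->insertPerson('Ada', 'Lovelace');

$loose = new LoosePerson();
// Adding an 'email' column needs no change to insertRecord()'s signature.
$looseRow = $loose->insertRecord([
    'first_name' => 'Ada',
    'last_name'  => 'Lovelace',
    'email'      => 'ada@example.com',
]);

print_r($tightRow);
print_r($looseRow);
```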

In this post he wrote:

When you use Singleton, your class is tightly coupled to the concrete singleton class, it removes possibility for polymorphism and makes it impossible for you to swap to a different implementation for purposes such as testing. DI is not loose-coupling itself, but it does offer a solution to your tight coupling problem

I disagree. A singleton is about obtaining a shared instance of an object and has nothing to do with method signatures. Loose coupling is about changing the data elements which are passed between objects without having to change any method signatures. If you are getting poor results from your singletons then it is your implementation of that pattern which is at fault. Instead of having a separate getInstance() method inside each class I have a static method inside a single singleton class, as explained in Singletons are NOT evil.

Singletons do not remove the possibility of polymorphism as I can create an instance of any of my 400 concrete Model classes using my singleton class, and as each of these Model classes inherits its methods from the same abstract class every one of those methods is available via polymorphism.

I do not have any tight coupling in my framework. No Controller is tightly coupled to a single Model, no Model is tightly coupled to a single Controller, no View is tightly coupled to a single Model, and no Model is tightly coupled to a single View. Each Model can be used with any Controller and any View.

It is a fallacy that Dependency Injection (DI) automatically provides loose coupling. DI is all about where the dependent object is identified/instantiated, it has nothing to do with the construction of objects or the use of any method signatures.

In this post he wrote:

Polymorphism indeed has something to do with coupling, because loosely coupled application usually has a lot of polymorphism. With Singletons, you kill polymorphism since its impossible to swap implementation of this class.

I disagree. Polymorphism indicates coupling, but is not an indicator of loose coupling. It is possible to have polymorphism without loose coupling, and it is possible to have loose coupling without polymorphism.

If your use of singletons prevents the provision of polymorphism then it is your use of singletons which is at fault. In my framework I do *NOT* have a duplicate getInstance() method inside every class, I have that method inside a separate singleton class. This means that I can call my getInstance() method on *ANY* class in my application, whether it be a superclass or a subclass, and it will give me an instance of that class.

In this post he wrote:

How can you have more polymorphism when you have so much tight coupling with Singleton

Firstly, the idea that you cannot have polymorphism with a singleton is wrong. Take the following code as an example:

```php
<?php
$class_name = "foo";
$object = singleton::getInstance($class_name);
$result = $object->getData();
?>
```

Here I can change the contents of the variable $class_name, obtain a singleton of the associated object, then call a method on that object without any problem whatsoever. I have been doing just that in my framework since 2005, so don't tell me it can't be done.
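The snippet above assumes that a `singleton` class and a `foo` class already exist. A minimal sketch of what such a class might look like is given here. It is an assumption made for illustration and not the framework's actual implementation, and the `foo` and `bar` classes are placeholders.

```php
<?php
// Hypothetical sketch: one class hands out shared instances of any other
// class, keyed by class name, so no class needs its own getInstance().
class singleton
{
    private static $instances = [];

    public static function getInstance(string $class_name)
    {
        if (!isset(self::$instances[$class_name])) {
            self::$instances[$class_name] = new $class_name();
        }
        return self::$instances[$class_name];
    }
}

// Two placeholder classes which support the same method signature.
class foo
{
    public function getData(): string
    {
        return 'data from foo';
    }
}

class bar
{
    public function getData(): string
    {
        return 'data from bar';
    }
}

// The same calling code works for either class name.
foreach (['foo', 'bar'] as $class_name) {
    $object = singleton::getInstance($class_name);
    echo $object->getData(), "\n";
}
```

Repeated calls with the same class name return the same object, while changing the class name switches the implementation that the calling code receives.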

Secondly, I only use singletons when I have a dependency in my Model classes such as the validation object or the data access object. I do not require any polymorphism here as there is only ever a single implementation. Where I do make use of DI is when I inject a Model into a Controller or when I inject a Model into a View.

He is also very good at inventing crazy new rules just to reinforce his pathetic arguments. In this post he said the following:

I told you already, an abstract class should provide a bare minimum of functionality that all child classes find useful. If your abstract class contains methods that only 1-2 child classes actually need, then you are doing it very wrong. You clearly don't even understand why it is called 'abstract' in the first place, your implementation of a 'know-all' Default_Table class is exactly the opposite of how abstract classes should look like.

Your opinion on what abstract classes should look like is inferior to the description found in Designing Reusable Classes which was published in 1988 by Ralph E. Johnson & Brian Foote.

I have never seen the definition of an abstract class which says that it should provide only the bare minimum of functionality and that every method that it contains MUST be used by every one of its child classes. Inherited, yes. Available, yes. Used, no. In my framework the abstract table class exists in the Model/Business layer and is inherited by every one of my concrete table classes, of which there are currently 400. The abstract class provides all the methods (which may be either abstract or non-abstract) which are called by any of my 45 reusable Controllers, where there is a separate controller for each of my Transaction Patterns. This means that these methods do not have to be implemented by any developer as the implementations are inherited from a single shared source. These methods provide the basic and common functionality, but this standard behaviour can be enhanced or overridden in any table class by placing code in the relevant customisable methods. This arrangement means that once I have created a concrete table class I can generate a new task from any transaction pattern, and that task will be able to run immediately with default behaviour without the need for any coding by any developer. If the default behaviour needs to be changed then the developer puts the relevant code into the relevant customisable method. The method in the child class then overrides the method in the abstract class.
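The idea of standard behaviour plus customisable methods can be sketched like this. The names `AbstractTable`, `Product`, `Order` and `preInsert()` are hypothetical stand-ins chosen for this illustration, and the database write is reduced to a comment.

```php
<?php
// Hypothetical sketch: names are illustrative, not the framework's real code.
abstract class AbstractTable
{
    // Called by a reusable controller; the same steps run for every table.
    public function insertRecord(array $row): array
    {
        $row = $this->preInsert($row);   // customisable method
        // ... standard validation and the database write would go here ...
        return $row;
    }

    // Default implementation does nothing; a subclass may override it.
    protected function preInsert(array $row): array
    {
        return $row;
    }
}

// No code at all: this class runs with the inherited default behaviour.
class Product extends AbstractTable
{
}

// This class changes the default by overriding the customisable method.
class Order extends AbstractTable
{
    protected function preInsert(array $row): array
    {
        $row['status'] = $row['status'] ?? 'NEW';   // custom business rule
        return $row;
    }
}

print_r((new Product())->insertRecord(['id' => 1]));
print_r((new Order())->insertRecord(['id' => 1]));
```

A controller that calls `insertRecord()` does not need to know which of the two classes it has been given, since both inherit the method from the same abstract class.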

Note that my abstract class does not contain any abstract methods, so none of these methods needs to be defined with an implementation in the inheriting class. The only time that a method in the abstract class needs to be implemented in an inheriting class is when that implementation needs to be modified. It is therefore incorrect to say that any of my concrete classes contains methods that it does not actually use. When the class is instantiated into an object, that object may contain inherited methods that are not used, but so what? What problem does this cause? If it does not cause a problem then why does it need a solution? I certainly will not be implementing your solution as it would actually create problems where none existed previously.

It is true to say that not every method needs to be used by every controller, but there is no rule that says that it should. It is common practice and therefore perfectly acceptable for different controllers to access the same object using different subsets of the methods which are available. Where I have 45 different controllers which implement my 45 different Transaction Patterns, there is no rule which says that I MUST implement every one of those 45 patterns for every child class. For example, I have some methods which are only used by the File Upload, Output to CSV or Output to PDF patterns, but each of these patterns is only implemented for a small number of classes. The methods used by those patterns are available for use in every child class, but they may not actually be used simply because that pattern is not (yet) implemented with that child class. So what? Does this cause a problem? Does this cause unexpected behaviour? Does this require any extra effort from the developer to deal with the fallout? If the answer to all these questions is "No", then what exactly is the issue? What is wrong? If the only thing that happens is that it makes you unhappy then my only comment is the Anglo-Saxon equivalent of "Go Forth and Multiply!"

In this post he said:

If some child classes have no use for methods such as file uploading, then the data and behaviors associated with file uploading should belong somewhere else. It is irrelevant, because from the aspect of your child classes that do not support file uploading, these fields/methods are useless.

....

from the subclass of Default_Table point of view, it may or may not need functionality for file uploading and pdf/csv handling. In this case, those child classes without needs for such functionality should not have those redundant logic at all, but by inheriting your God class they have to receive those fields/methods that shouldn't belong to them. You are breaking encapsulation and achieving low cohesion by putting unrelated fields/methods into the same class.

Excuse me, but having an inherited method in a child class which is not actually used does not break encapsulation or lower cohesion. If there is a method in the abstract class which is not used then it is not defined in the concrete class.

In this post he wrote:

So if the methods are not used by certain child classes, it means the child classes should not inherit this method

Excuse me, but when you inherit from an abstract class you automatically inherit ALL the properties and methods from that abstract class. There is no mechanism in any OO language which allows you to pick and choose subsets to inherit, so it is unacceptable for you to invent a rule for which no mechanism exists.

In this post he started to contradict himself:

I already explained again and again to you, that an abstract class is meant to provide functionality needed by all or most child classes, not functionality that only one or two child classes will find useful.

By changing his wording from "all" to "all or most" he has opened the door to admitting that not every method in the abstract class need actually be used in every child class. The words "not all" need not be interpreted as "most" as either "some" or "at least one" are perfectly valid. Provided that a method in a child class is used by at least one pattern/controller, then that method has a perfect right to exist in the abstract class so that it is available should that pattern ever be implemented within a child class.

In this post he changed his definition yet again:

The definition of 'abstract class' doesn't say all of its methods should be useful by every child class, but the definitions of cohesion and encapsulation say this. You have no way to achieve high cohesion and proper encapsulation if your child classes inherit methods that don't belong to them at all

The fact that some out of 400 child classes inherit methods which are not actually used is irrelevant. The abstract table class is filled with methods which may be used by any of the concrete table classes depending on which Transaction Patterns are used with that concrete class. There is no way to "uninherit" any unused methods, nor to inherit only that subset of the available methods that you actually need. An unused method does not cause a problem in the real world as it exists only in the abstract class and is never overridden in the concrete class, so if there is no problem then why do you keep insisting that there is?

Perhaps his confusion over my use of an abstract class lies in the fact that he believes that every method in an abstract class must automatically be an abstract method, in which case every one of these methods would need to be copied into every subclass. Being forced to define a method in a subclass which was not actually used would therefore NOT be a good idea. However, that is not how abstract classes work. This is what the PHP manual says:

PHP 5 introduces abstract classes and methods. Classes defined as abstract may not be instantiated, and any class that contains at least one abstract method must also be abstract. Methods defined as abstract simply declare the method's signature - they cannot define the implementation.

Note here that it says that methods within an abstract class may be defined as abstract methods, but they need not be, in which case they can provide an implementation. This means that if you inherit from an abstract class, and that abstract class contains any abstract methods, then you MUST define each of those methods in your concrete class so that you can provide the implementation for each of them. On the other hand, if the abstract class contains any non-abstract methods, then none of those methods need be defined in any concrete class at all. The only time that you need to copy the signature for a non-abstract method from the parent class into your concrete class is when you want to override that method's implementation. If you do not override it, then the original implementation in the parent class will be used by default. If you do override a method then the parent implementation will not be executed unless you call it explicitly with a line of code which uses the Scope Resolution Operator, as in parent::methodName().
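The rules described above can be shown in a few lines of PHP. This is only a minimal sketch, assuming PHP 7 or later for the type declarations. All the class and method names (AbstractTable, Customer, Product, getTableName, validateInsert, getUploadDirectory) are hypothetical and invented for illustration; they are not taken from my framework.

```php
<?php
// Hypothetical names throughout, for illustration only.
abstract class AbstractTable
{
    // Abstract method: signature only, so every concrete subclass MUST implement it.
    abstract public function getTableName(): string;

    // Non-abstract method: inherited as-is unless a subclass chooses to override it.
    public function validateInsert(array $row): array
    {
        $errors = [];
        if ($row === []) {
            $errors[] = 'Nothing to insert into ' . $this->getTableName();
        }
        return $errors;
    }

    // Non-abstract method which some subclasses may never call or override.
    public function getUploadDirectory(): string
    {
        return 'uploads/' . $this->getTableName();
    }
}

class Customer extends AbstractTable
{
    // The only method this class is forced to define.
    public function getTableName(): string
    {
        return 'customer';
    }
}

class Product extends AbstractTable
{
    public function getTableName(): string
    {
        return 'product';
    }

    // Override: the parent implementation runs only because it is called explicitly.
    public function validateInsert(array $row): array
    {
        $errors = parent::validateInsert($row); // Scope Resolution Operator
        if (isset($row['price']) && $row['price'] < 0) {
            $errors[] = 'Price cannot be negative';
        }
        return $errors;
    }
}

$customer = new Customer();
$product  = new Product();
$customerErrors = $customer->validateInsert([]);
$productErrors  = $product->validateInsert(['price' => -1]);
```

In this sketch Customer never mentions validateInsert() or getUploadDirectory(), yet both are available to it, and an unused inherited method costs the subclass nothing.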

The Gang of Four book on Design Patterns has this to say about abstract classes when describing a method of using class inheritance which avoids the side-effects which arise from its overuse:

One cure for this [problematic implementation dependencies] is to inherit only from abstract classes, since they usually provide little or no implementation.

The phrase "since they usually provide little or no implementation" tells me that most programmers are failing to realise the full potential of abstract classes by having little or no code which can be reused. This totally negates the benefit of using OOP in the first place, which is supposed to increase the amount of reusable code and thereby reduce maintenance. This tells me that most programmers are failing to identify the correct abstractions in their software. I design and build nothing but enterprise applications which deal with large volumes of data concerning numerous different entities, where this data is stored in tables in a relational database, one table per entity. The application therefore does NOT interface with objects in the real world, it interfaces with tables in a database which contain information on those objects. I am constantly being told that I am not practicing OOD correctly, but when I ask the "is-a" and "has-a" questions which are supposed to be the backbone of OOD I come up with the following answers:

This then leads me to the following observations which are blindingly obvious to me, but which almost everybody else seems to miss:

I am constantly being told that "proper" OO developers DO NOT have a separate class for each database table, but their logic is so full of holes I wonder how they can possibly write software which is cost effective or even workable. If you look at Having a separate class for each database table is not good OO you will see the following statement made by a so-called OO guru:

Classes are supposed to represent abstract concepts. The concept of a table is abstract. A given SQL table is not, it's an object in the world.

If the author of that statement actually understood what he wrote he would see the following:

Template methods are a fundamental technique for code reuse. They are particularly important in class libraries because they are the means for factoring out common behaviour.
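The idea behind a template method can be sketched as follows. This is an illustrative example under stated assumptions, not a copy of my framework's code: the names AbstractTableTemplate, Order, Note, insertRecord, preInsert and postInsert are all hypothetical, and the database write is replaced by a stand-in line.

```php
<?php
// Hypothetical names throughout, for illustration only.
abstract class AbstractTableTemplate
{
    // The template method: it fixes the sequence of steps for every subclass.
    final public function insertRecord(array $row): array
    {
        $row = $this->preInsert($row);   // hook, may be overridden
        $row['saved'] = true;            // stand-in for the real database write
        return $this->postInsert($row);  // hook, may be overridden
    }

    // Hook methods: the default implementations do nothing.
    protected function preInsert(array $row): array
    {
        return $row;
    }

    protected function postInsert(array $row): array
    {
        return $row;
    }
}

class Order extends AbstractTableTemplate
{
    // Only the hook which needs custom behaviour is overridden.
    protected function preInsert(array $row): array
    {
        $row['status'] = $row['status'] ?? 'pending';
        return $row;
    }
}

class Note extends AbstractTableTemplate
{
    // No hooks overridden: the inherited behaviour is used unchanged.
}

$order = (new Order())->insertRecord(['id' => 1]);
$note  = (new Note())->insertRecord(['id' => 2]);
```

The common sequence lives once in the abstract class, while each concrete class supplies only the steps that differ.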

I firmly believe that I am using the features of OO in the way that they were designed to be used, with fewer side-effects, and producing large amounts of reusable code, so all my critics are barking up the wrong tree.

In any engineering discipline there are laws that must be followed otherwise your project will fail. There are other things called "rules" or "guidelines" which have no effect on the success or failure of the project, but which are added to please the bureaucrats so that they can tick boxes on pieces of paper. For example, when building an aeroplane you must obey the laws of aerodynamics otherwise your plane will not get off the ground. There may also be rules which state how an aircraft should be built, what materials should be used, what shape it should be, et cetera, but these are far less important than the laws of aerodynamics. You may build a plane that ticks all the bureaucrat's boxes, but if it doesn't fly it is a failure, and the fact that a bureaucrat has a piece of paper on which all the boxes have been ticked will be irrelevant.

If an innovator comes along with a different approach which allows aircraft to be built faster and cheaper by utilising different techniques, different materials and/or different shapes, the only important factor in the eyes of the paying customers is "Does it fly?" If it happens to be cheaper than his competitors then the innovator will take business away from them. In such circumstances it would be pretty pointless for the competitors to complain that the innovator is breaking the rules. The definition of "innovate" is "Make changes in something established, especially by introducing new methods, ideas, or products". Innovation requires change, and this sometimes means throwing out the current rule book and starting again with a new set of rules.

If I break an engineering law and something bad happens, then the fault is mine. My plane won't fly, my boat won't float, my bridge will fall down, my software won't work as expected. If I break a bureaucratic rule and nothing bad happens, then that rule has no practical purpose and its existence can be questioned. On the other hand the breaking of a bureaucratic rule may result in something pleasant, such as better performance, quicker delivery or lower costs. The fact that a bureaucrat is upset because he can no longer tick that box on his sheet of paper becomes irrelevant.

There are very few "laws" in the world of software engineering. Personally I can see only three:

When writing database applications for the web I am constrained by the following:

When it comes to programming style there is no "one size fits all" approach such as that being demanded by Hall of Famer. The only "rule" that I have followed in all the languages that I have used is based on the following statement from Abelson and Sussman in their book Structure and Interpretation of Computer Programs which was first published in 1985:

Programs must be written for people to read, and only incidentally for machines to execute.

This requires the use of meaningful data names, meaningful procedure names, a structure that can be shown in a diagram, and logic that can be easily understood. Along the way I have adopted other principles such as KISS, DRY and YAGNI, but anything else I see as a passing fancy and not a universal law that must be obeyed without question. In order to write effective software I have to obey the laws of software engineering, but standards and best practices are not laws, they are guidelines. As a pragmatic programmer I will only follow those guidelines which have proved their worth in my 30+ years of programming experience. As Petri Kainulainen says in his article We Should Not Make (or Enforce) Decisions We Cannot Justify, I have the right to question any rule in order to evaluate its actual worth. Any guideline which stands in my way will be brushed aside, and provided that my paying customers are impressed with the result I couldn't care less about upsetting the delicate sensibilities of some petty bureaucrat.

Fundamental - forming the base, from which everything else develops

Evolve - to gradually change over time

In this post I made the following statement:

OOP consists of nothing more than encapsulation, inheritance and polymorphism, and everything else is an optional extra.

He replied with the following:

those are NOT add-on definitions of OOP, they are fundamental and universally agreed concepts.

I pointed out to him that the fundamental or original definition of OO was made by Alan Kay, who invented the term, in which he stated that OO consists of nothing more than encapsulation, inheritance and polymorphism, so anything added after that is an optional extra. There are now many different languages which implement OO theory in different ways, to which different people have added their own ideas of how OO could be "improved", but all these ideas were later additions and not included in the original definition of OO.

Logic - program code which performs a function

Information - data which is processed by code

In this post he made this statement:

When a class has 9000 lines, it is guaranteed to have more than one responsibility

I tried to explain that my framework had been separated into an adequate number of components by virtue of the fact that it implemented a combination of both the 3-Tier Architecture and Model-View-Controller design pattern. As my so-called "God" class contains logic which is restricted to operations performed in or by the Model, and contains no logic which rightly belongs in a Controller, View or DAO, it does not break SRP at all. I asked him to point out any logic in my abstract class which should be in one of the other components, and in this post he answered with:

I stopped reading once I found file uploading logic/responsibility in that class

He made the same accusation in this post, to which I replied with:

No table class performs any file uploading as that is done within the Controller. It merely has methods which supply the destination directory and file name, plus a post-upload method which allows for additional business rules to be specified after the upload has been completed.

In this post he continued his argument with the following:

Even though the actual file uploading is done in your controller class, your god class does contain File uploading logic. Its not just a courier of information, it actually processes this information.

Hall of Famer clearly does not understand the difference between logic and information. Having a method in a Model which passes information/data back to the Controller, which then performs the file upload, is not the same as having logic/code within the Model that performs the file upload. The Model passes the data to the Controller, and it is only the Controller which contains the logic/code which processes this data.
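The division of labour can be sketched like this. It is a simplified illustration, assuming PHP 7 or later, and not the actual code from my framework: the names DocumentModel, UploadController, getUploadTarget, postUpload and handle are hypothetical. The file move is passed in as a callable so that the sketch can be run without a real HTTP upload; in a live script the callable could be PHP's built-in move_uploaded_file function.

```php
<?php
// Hypothetical names throughout, for illustration only.
class DocumentModel
{
    // Supplies information only: where the file should go and what to call it.
    public function getUploadTarget(string $originalName): array
    {
        return [
            'directory' => 'uploads/documents',
            'filename'  => basename($originalName),
        ];
    }

    // Post-upload method: a place for business rules once the upload has completed.
    public function postUpload(string $path): string
    {
        return 'stored:' . $path;
    }
}

class UploadController
{
    // $mover is a stand-in for move_uploaded_file(); it receives (from, to) and returns bool.
    public function handle(DocumentModel $model, string $tmpPath, string $originalName, callable $mover): string
    {
        $target      = $model->getUploadTarget($originalName);
        $destination = $target['directory'] . '/' . $target['filename'];

        // The upload itself is performed here, in the Controller, not in the Model.
        if (!$mover($tmpPath, $destination)) {
            throw new RuntimeException('Upload failed');
        }
        return $model->postUpload($destination);
    }
}

// A fake mover which records what it was asked to do instead of touching the file system.
$moves = [];
$fakeMover = function (string $from, string $to) use (&$moves): bool {
    $moves[] = [$from, $to];
    return true;
};

$result = (new UploadController())->handle(new DocumentModel(), '/tmp/php123', 'Report.pdf', $fakeMover);
```

Nothing in DocumentModel moves a file; it only answers questions put to it by the Controller.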

Hall of Famer seems to have the notion that just because a feature exists in the language then it should be used, or if a programming principle has been created by a supposed "mastermind" then it should be followed. Intelligent people know different. Unlike a mountain climber whose justification for climbing a particular mountain is "Because it is there!", in computer programming you only use a particular feature of the language, a particular function, or particular syntax when it helps you achieve the objectives of the program or library which you are writing. I have used many languages in my long career, and I have rarely used more than 50% of the features in any of those languages. Why not? Because I had no use for them. Just because they are useless to me does not mean that they are also useless to everybody else.

Just because I could use a new feature that has been added to the language does not mean that I should. Sometimes a feature is added to provide coverage for a topic that was not covered previously, but sometimes it is added just to provide a different way of doing something that can already be done. Each time I see a new feature in the language I ask myself some simple questions:

If I don't have the problem that a feature was meant to solve then I have no use for that feature. This includes namespaces.

If the cost of changing existing code to do the same thing, but in a different way, is greater than the benefits that would be provided, then use of that feature cannot be justified. I would put autoloaders and short array syntax in this category.

If a feature was designed to solve a problem in the language that no longer exists, such as object interfaces, then that feature is dead and should be removed. Object interfaces did not exist in PHP 4 as they were not necessary. They were only added in PHP 5 because a vociferous (i.e. loud-mouthed) group of developers used the pathetic argument that "interfaces exist in other OO languages, so they should be in PHP as well".

Structured programming is a programming paradigm aimed at improving the clarity, quality, and development time of a computer program by making extensive use of subroutines, block structures, for and while loops - in contrast to using simple tests and jumps such as the go to statement which could lead to "spaghetti code" causing difficulty to both follow and maintain.

Monolithic System - A software system is called "monolithic" if it has a monolithic architecture, in which functionally distinguishable aspects (for example data input and output, data processing, error handling, and the user interface) are all interwoven, rather than containing architecturally separate components.

Monolithic Application - describes a single-tiered software application in which the user interface and data access code are combined into a single program from a single platform. It is designed without modularity. Modularity is desirable, in general, as it supports reuse of parts of the application logic and also facilitates maintenance by allowing repair or replacement of parts of the application without requiring wholesale replacement.

Spaghetti code is a pejorative phrase for source code that has a complex and tangled control structure, especially one using many GOTO statements, exceptions, threads, or other "unstructured" branching constructs. It is named such because program flow is conceptually like a bowl of spaghetti, i.e. twisted and tangled. Spaghetti code can be caused by several factors, such as continuous modifications by several people with different programming styles over a long life cycle. Structured programming greatly decreases the incidence of spaghetti code.

In this post he made the following statement:

Yes it is possible to write well-structured Java and COBOL code, I never said you cannot. However its impossible to write well-structured procedural code. Procedural code can be structured, but only badly structured. Writing procedural Java code, then its badly structured. Writing COBOL code with OO design, then its well structured.

You should see here that he is contradicting himself: